Jesse Edelstein//February 24, 2026

Migrating a Mainframe... For Fun

A guide to migrating COBOL code with an agent.

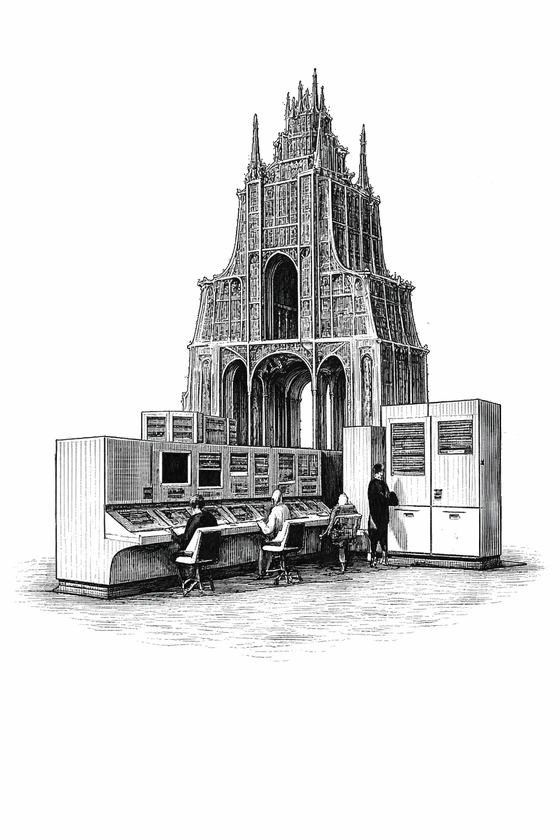

The last time the COBOL programming language was meaningfully updated was in 2002 — I was 12 months old. The only time I have ever seen a real mainframe computer is in a museum. But I recently developed a fascination for these fossils and the programming languages that run on them.

Why do so many legacy organizations still run their mission-critical software on mainframes? We are seeing coding agents write roughly 90% of the code on more complex projects. Yet if you are a technology leader at a bank, airline, or insurer, it seems like your only path to modernization is to hire armies of consultants to place their stamp of approval on the project.

Could it really be all that complex? Or is the complexity just a cover to sell more billable hours? I decided to find out for myself. So I opened up my terminal and set off with the following goal:

Run an end-to-end mainframe to cloud migration writing 100% of code with agents

How Does COBOL Work?

Before getting my hands dirty, I needed to take a quick look at how the COBOL programming language actually works. As an example, I used a demo credit card management application. The app contains 30 programs, each of which manages a different part of the app lifecycle (managing accounts, paying bills, issuing new cards, and so on).

Each program has deep interdependencies with the others, and business logic is entangled with UI navigation. When a user signs in, the login program writes their identity into a shared memory block; the runtime then calls downstream programs with that buffer. Programs don’t declare their dependencies. They just receive bytes in a buffer that everyone trusts were set correctly. This is a pretty significant departure from modern web app architecture.

The challenge: Collapse each interactive terminal program into a single stateless request and make every hidden dependency explicit as input or runtime configuration
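To make the challenge concrete, here is a minimal Python sketch of the two styles side by side. The buffer layout, field names, and program name are hypothetical and not taken from the demo application; a real layout would come from the program's copybook, and a real mainframe buffer would typically be EBCDIC rather than ASCII.

```python
from dataclasses import dataclass

# Hypothetical fixed-width layout of the shared buffer: (field, offset, length).
# These offsets are invented for illustration only.
SHARED_BUFFER_LAYOUT = [
    ("user_id", 0, 8),
    ("user_type", 8, 1),
    ("from_program", 9, 8),
]


@dataclass(frozen=True)
class SessionContext:
    """Explicit form of what the shared buffer carried implicitly."""
    user_id: str
    user_type: str
    from_program: str


def decode_buffer(buffer: bytes) -> SessionContext:
    """Slice raw bytes by offset, the way a downstream program trusts them."""
    fields = {
        name: buffer[start:start + length].decode("ascii").rstrip()
        for name, start, length in SHARED_BUFFER_LAYOUT
    }
    return SessionContext(**fields)


def to_request(ctx: SessionContext, action: str) -> dict:
    """Stateless request: every dependency is a named input, nothing is ambient."""
    return {"action": action, "user_id": ctx.user_id, "user_type": ctx.user_type}


raw = b"USER0001" + b"A" + b"SIGNON  "
ctx = decode_buffer(raw)
print(to_request(ctx, "LIST_ACCOUNTS"))
```

In the original style nothing stops a program from reading the wrong offset. In the stateless style a missing field becomes a visible error at the API boundary.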

Mapping the Codebase

In order to visualize this complexity at a high level, we need a proper map.

To construct this, I gave the agent a set of custom skills. Extending an existing COBOL parsing tool, I wrote a handful of CLI wrappers:

- parse: parse one COBOL file into a dense summary.

- deps: list program dependencies and calls.

- detect-inputs: find conversational loops that need refactoring to single-shot.

- env-vars: extract file-control assignments and env vars.

- data-ref: find dataset references for data mapping.

The final generate command leverages these tools to produce a rich semantic graph of the programs, files, and control flows in the codebase.
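As a rough illustration of what such a graph might contain, the Python sketch below scans source text for static CALL and COPY statements and records them as edges. It is not the actual generate command: the regexes are deliberately simplified, the program names are made up, and a real parser would also need to handle comments, continuation lines, and dynamic calls through a data name.

```python
import re
from collections import defaultdict

# Simplified, hypothetical patterns for static CALL targets and COPY members.
CALL_RE = re.compile(r"\bCALL\s+'([A-Z0-9-]+)'", re.IGNORECASE)
COPY_RE = re.compile(r"\bCOPY\s+([A-Z0-9-]+)", re.IGNORECASE)


def build_graph(sources: dict[str, str]) -> dict[str, dict[str, set[str]]]:
    """Map each program to the programs it calls and the copybooks it includes."""
    graph = defaultdict(lambda: {"calls": set(), "copies": set()})
    for program, text in sources.items():
        node = graph[program]
        node["calls"].update(name.upper() for name in CALL_RE.findall(text))
        node["copies"].update(name.upper() for name in COPY_RE.findall(text))
    return dict(graph)


# Made-up two-program example.
sample = {
    "SIGNON": "       COPY USRSEC.\n       CALL 'MENU' USING WS-BUFFER.\n",
    "MENU": "       CALL 'ACCTVIEW' USING WS-BUFFER.\n",
}
graph = build_graph(sample)
for program, edges in sorted(graph.items()):
    print(program, sorted(edges["calls"]), sorted(edges["copies"]))
```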

This is the map: a high-level view of the migration surface. A canvas to paint on. Take a look around. All of the threads used for this migration are available for view. What do you notice?

Keeping a Manifest

Now that we have our map we need to create a manifest for how each program will map to its new stateless REST API endpoint. We can use the semantic map to auto-generate a target API schema that maps entry programs to routes and converts implicit dependencies into explicit JSON schema.

I reused scripts from the detect-inputs and env-vars skills to deterministically surface runtime dependencies.
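A manifest entry could look something like the Python sketch below. The record shape, the program name, and the route are assumptions made for illustration; only the USRSECFILE variable appears in this post (in the smoke-test command further down).

```python
import json


def manifest_entry(program: str, route: str, inputs: list[str], env_vars: list[str]) -> dict:
    """Build one manifest record: route, explicit request schema, runtime config."""
    return {
        "program": program,
        "route": route,
        "method": "POST",
        "request_schema": {
            "type": "object",
            "properties": {name: {"type": "string"} for name in inputs},
            "required": list(inputs),
        },
        "env": sorted(env_vars),
    }


entry = manifest_entry(
    program="SIGNON",                # hypothetical program name
    route="/api/login",              # hypothetical route
    inputs=["user_id", "password"],  # the kind of fields detect-inputs might surface
    env_vars=["USRSECFILE"],         # the kind of assignment env-vars might surface
)
print(json.dumps(entry, indent=2))
```

Because the record is built from deterministic tool output rather than model judgment, regenerating it for the same program should give the same schema every time.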

Here are the new API endpoints for the 17 entry programs in the credit card application.

Getting Our Hands Dirty

With a source map and a manifest in hand, it's time to get to work. I pasted the following prompt into an Amp thread and watched it run loose:

Pop the front of the task queue and find the associated program in cbl/. You have the following skills at your disposal: parse, detect-inputs, env-vars, data-ref.

Use parse for individual program summaries, detect-inputs for conversational loop detection and refactoring into single-shot REST-compatible endpoints, and env-vars plus data-ref to map I/O to the target API schema and update handlers accordingly.

The final program should match the target API schema provided in the task description.

After migration, verify the program can handle its stdin/stdout contract:

- Build subroutine dependencies: cobc -m -I cpy/ cbl/SUBROUTINE.cbl -o bin/SUBROUTINE.so

- Compile the program: cobc -x -I cpy/ cbl/PROGRAM_NAME.cbl -o bin/PROGRAM_NAME

- Pipe test input and check output: echo "LOGIN|USER0001|PASSWORD" | COB_LIBRARY_PATH=./bin USRSECFILE=data/USRSEC.dat ./bin/PROGRAM_NAME

- Verify stdout contains "SUCCESS:" and the expected pipe-delimited fields

DO NOT MARK A TASK AS COMPLETE UNLESS:

1. The program compiles without errors

2. A stdin smoke test produces the expected stdout output

If you run into any issues, add an annotation to the graph.

Once complete, hand off to a new thread for each ready task

For the first round, each agent has a two-step feedback loop: the GnuCOBOL compiler confirms the program is syntactically valid, and a stdin→stdout smoke test confirms it produces the right output against real data files.
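That two-step gate can be sketched in a few lines of Python. This is an illustration under assumptions, not the harness used in the threads: the function names are invented, and the output format is assumed to be a line containing "SUCCESS:" followed by pipe-delimited fields, as the prompt describes.

```python
import os
import subprocess


def stdout_passes(stdout: str, min_fields: int) -> bool:
    """True if a line contains SUCCESS: followed by enough pipe-delimited fields."""
    for line in stdout.splitlines():
        _, marker, payload = line.partition("SUCCESS:")
        if marker:
            payload = payload.strip()
            fields = payload.split("|") if payload else []
            return len(fields) >= min_fields
    return False


def gate(compile_cmd: list[str], run_cmd: list[str], stdin_line: str,
         extra_env: dict[str, str], min_fields: int) -> bool:
    """Step 1: the compiler must exit 0. Step 2: the smoke test output must pass."""
    if subprocess.run(compile_cmd).returncode != 0:
        return False
    result = subprocess.run(
        run_cmd,
        input=stdin_line + "\n",
        env={**os.environ, **extra_env},  # keep PATH etc., add the file assignments
        capture_output=True,
        text=True,
    )
    return result.returncode == 0 and stdout_passes(result.stdout, min_fields)


# Checking the output rule on its own, without needing cobc installed.
print(stdout_passes("SUCCESS:USER0001|A", 2))
print(stdout_passes("ERROR:user not found", 1))
```

With GnuCOBOL installed, the commands from the prompt would be passed in as argument lists, for example `["cobc", "-x", "-I", "cpy/", "cbl/PROGRAM_NAME.cbl", "-o", "bin/PROGRAM_NAME"]` for the compile step and `{"COB_LIBRARY_PATH": "./bin", "USRSECFILE": "data/USRSEC.dat"}` for the extra environment.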

Assembling the Puzzle

Each program compiles and passes smoke tests in isolation. Now we need to assemble the system and verify end-to-end behavior under the new API contract.

All programs have been individually tested. Now assemble and validate the full application.

1. Write a build script that compiles all shared subroutines as loadable modules first, then compiles every main program as a standalone executable. Run it and fix any unresolved symbols, missing COPY members, or LINKAGE SECTION mismatches.

cobc -m -I cpy/ cbl/SUBROUTINE.cbl -o bin/SUBROUTINE.so

cobc -x -I cpy/ cbl/PROGRAM.cbl -o bin/PROGRAM

2. Using the OpenAPI schema as your contract, write an Express server that maps each endpoint to its compiled COBOL binary. For each request, spawn the binary as a child process, pipe a delimited stdin line derived from the JSON request body, and parse the structured stdout back into a JSON response.

3. Write a smoke test suite that hits every endpoint in the OpenAPI schema and verifies the HTTP status codes and response shapes match the spec.

DO NOT MARK INTEGRATION AS COMPLETE UNLESS:

- The full build completes with 0 failures

- All smoke tests pass against the OpenAPI schema

- If you run into issues, annotate the build task with your notes and mark as in-progress

This is the moment of truth. If the application stands up and the new API endpoints return expected outputs, we’re off to the races.
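The heart of step 2 in the prompt above is the translation between JSON and the delimited stdin/stdout contract. The sketch below shows that translation in Python rather than Express, purely to illustrate the idea. The function names, the field ordering, and the choice of a 500 status for any non-SUCCESS output are all assumptions.

```python
import subprocess


def to_stdin_line(action: str, body: dict, field_order: list[str]) -> str:
    """Flatten a JSON body into the pipe-delimited line the binary reads."""
    values = [str(body[name]) for name in field_order]  # KeyError means a missing input
    if any("|" in v or "\n" in v for v in values):
        raise ValueError("field values may not contain '|' or newlines")
    return "|".join([action, *values])


def parse_stdout(stdout: str, field_names: list[str]) -> tuple[int, dict]:
    """Map the binary's output to (HTTP status, JSON-ready dict)."""
    for line in stdout.splitlines():
        _, marker, payload = line.partition("SUCCESS:")
        if marker:
            return 200, dict(zip(field_names, payload.strip().split("|")))
    return 500, {"error": stdout.strip()}


def call_program(binary: str, stdin_line: str, env: dict) -> str:
    """Spawn the compiled program once per request and capture its stdout."""
    result = subprocess.run([binary], input=stdin_line + "\n", env=env,
                            capture_output=True, text=True, timeout=10)
    return result.stdout


line = to_stdin_line("LOGIN", {"user_id": "USER0001", "password": "PASSWORD"},
                     ["user_id", "password"])
print(line)
print(parse_stdout("SUCCESS:USER0001|A\n", ["user_id", "user_type"]))
```

Rejecting values that contain the delimiter matters here: without that check, a request field containing `|` would shift every later field by one position inside the COBOL program.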

Moving Day

Now that the full app runs without proprietary runtime dependencies, it needs a new home. I included the API schema in the prompt and tasked the agent with building a React frontend wired up to the new endpoints. Then I had it package the entire system into a Docker container for deployment.

The final application is now running in the cloud. Try it directly below.

What’s Next?

I have to admit, this all went a lot smoother than expected. I was sure that somewhere along the way my hubris would catch up to me and I would be left sweeping up the slop. But it just worked!

So what does this mean? Are large scale migrations a solved game? Is AGI here?

OK, maybe not, but I do have a few thoughts on why it went so well:

- This kind of migration has super dense and reliable feedback loops. The agent rarely has to guess what to work on. The environment exists to steer the agent towards the relevant problem.

- The application I chose has very little dead code. It's really hard to find public repos that closely mirror a real COBOL runtime. In the field, we might need to spend twice as much time triaging code before the migration even kicks off.

Regardless, the same thought kept haunting me as I watched the factory run:

If you are still following the old mainframe modernization playbook, you are falling behind

“AI enabled” consultants won’t fix this. Specialized models from vendors wrapped in a nice bow won’t save you. You need to burn the playbook. Everything is changing.